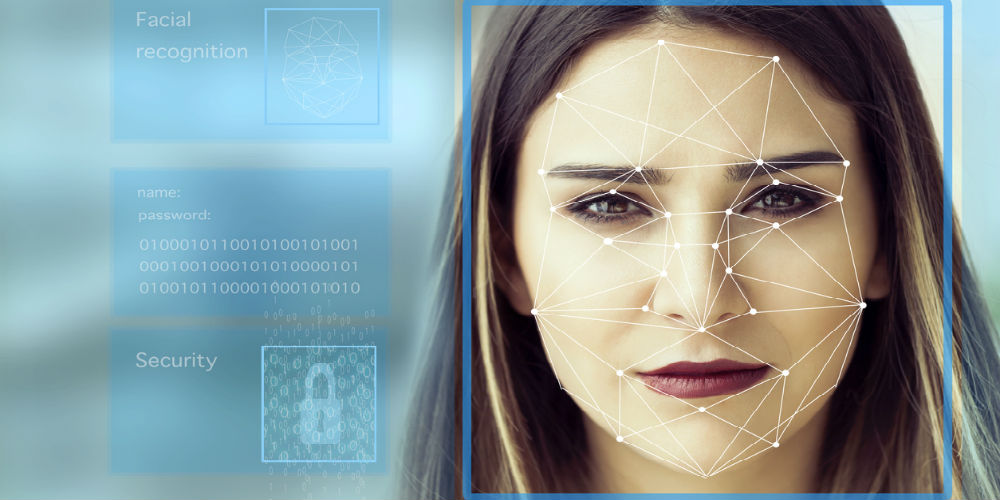

Facial recognition is being floated as a technology that could help in the wake of the coronavirus, but some major cities aren’t sold on the technology. Now, you can add Boston to that list.

The capital of Massachusetts just became the second-largest community in the world to ban the use of facial recognition surveillance technology, according to WBUR. The ban also prohibits any city official from obtaining facial surveillance by requesting it through third parties.

The Boston-based public radio station reported on the city council’s Wednesday meeting, during which councilors cited a study from the Massachusetts Institute of Technology that found the technology had an error rate of up to 35% for darker-skinned women.

Councilor Ricardo Arroyo, who sponsored the bill along with Councilor Michelle Wu, said the technology is wildly inaccurate for people of color.


“It has an obvious racial bias and that’s dangerous,” Arroyo said. “But it also has sort of a chilling effect on civil liberties. And so, in a time where we’re seeing so much direct action in the form of marches and protests for rights, any kind of surveillance technology that could be used to essentially chill free speech or … more or less monitor activism or activists is dangerous.”

During a hearing earlier this month, Boston Police Commissioner William Gross said the current technology isn’t reliable, and that it isn’t used by the department.

“Until this technology is 100%, I’m not interested in it,” he said.

“I didn’t forget that I’m African American and I can be misidentified as well,” he added.

Other facial recognition bans

Boston joins five other Massachusetts communities that have banned facial recognition: Somerville, Brookline, Northampton, Springfield and Cambridge.

Elsewhere in the country, several cities in California have banned the technology, including San Francisco, Berkeley, Alameda and Oakland.

Amazon recently halted law enforcement use of Rekognition, the tech giant’s facial recognition technology, for one year due to ethics concerns. That followed a similar decision by IBM.

Earlier this year, UCLA dropped its plans for facial recognition on campus because of the tech’s inaccuracy when analyzing faces of minorities.

Facial recognition developers clearly have some work to do before any widespread adoption is considered in the U.S.
